The Robot’s Mixtape

by Deirdre Loughridge

The boundary between man and machine became foggy long before the advent of Instagram and Spotify. Your computer is already smarter than you; might it soon have better taste, too?

Nicole Chevalier as the automaton Olympia in Les Contes d’Hoffmann. Directed by Barrie Kosky. Komische Oper Berlin. 2015. Photo: Monika Rittershaus.

I.

There’s something not to like about getting music recommendations from a machine. Music recommendation, or music discovery as it is also known, has become central to the business model of music streaming services (the market leaders Spotify and Pandora, the recently launched Apple Music, Google’s and Amazon’s efforts, the Jay Z-backed Tidal, and half a dozen others), each of them striving to distinguish itself through a superior ability to deliver an endless supply of music we’ll love. Discussions of these systems regularly pit humans against algorithms, like a musical version of John Henry’s showdown with a steam-powered hammer. Surveying the state of music discovery this past June in the New York Times, Ben Ratliff counterposed playlists made up of hand-picked tracks with those determined by “purely algorhythmic [sic] logic,” and his conclusion was definitive: “That’s where discovery will always lie: In the suggestions of actual human beings.” The forces behind Apple’s new streaming service, launched around the same time, evidently concurred. According to its spokespersons, the best playlists come from knowledgeable music lovers. As Jimmy Iovine, head of the service, explained, “Algorithms alone can’t do that emotional task. You need a human touch.”

It thus came as the ultimate twist when three months later, writing of Spotify’s new Discover Weekly feature for the website The Verge, Ben Popper lovingly recalled the playlist that introduced him to the Senegalese artist Aby Ngana Diop. “It felt like an intimate gift from someone who knew my tastes inside and out, and wasn’t afraid to throw me a curveball,” he wrote. “But the mix didn’t come from a friend — it came from an algorithm.”

The frisson of disquiet we might experience at that revelation — this feeling of intimacy, of someone truly understanding me, came from an algorithm — taps into deep historical anxieties about the boundary between humans and machines. Today, these anxieties often play out as fears that humans will become obsolete, replaced, surpassed, or destroyed by the artificial intelligences we are creating. Art, literature, and especially music have had a major role to play in mobilizing such fears, for over the past two centuries they have provided an uneasy meeting place for the human values of emotional depth, individuality, and creativity on the one hand, and the mechanical values of precision, repetition, and automation on the other.

While human and machine represent familiar poles in this discussion, a historical look brings into view just how slippery, mutable, and contingent those two concepts really are. Even where one locates “actual music” amidst its various material embodiments and sonic manifestations proves up for debate. Consider, as an example, how the Talking Heads frontman David Byrne describes the change wrought by phonography in his book How Music Works (2012). “Before recording technology existed,” Byrne writes, “you couldn’t take [music] home, copy it, sell it as a commodity (except as sheet music, but that’s not music), or even hear it again…. Technology changed all that in the 20th century. Music (or its recorded artifact) came to be regarded as a product — a thing that could be bought, sold, traded, and replayed endlessly in any context.”

Today we might readily go along with Byrne’s premises. Sound, not notation, is what music “really” is; hence recordings, not sheet music, make it possible to purchase music and take it home. A hundred years ago, however, this view would not have been so intuitive. In the 19th and early 20th centuries, it was perfectly self-evident that sheet music was indeed music. Jane Austen expressed such a relationship to the printed score in Sense and Sensibility (1811). When the lovesick Marianne goes to the piano, she finds there, printed, purchased, and taken home, nothing less than music itself: “The music on which her eye first rested was an opera, procured for her by Willoughby.” Further unsettling Byrne’s intuitions, the scene continues: Marianne “put the music aside, and, after running over the keys for a minute complained of feebleness in her fingers and closed the instrument again.” For Austen, the printed score was the music, more so than the auditory phenomenon of improvised playing.

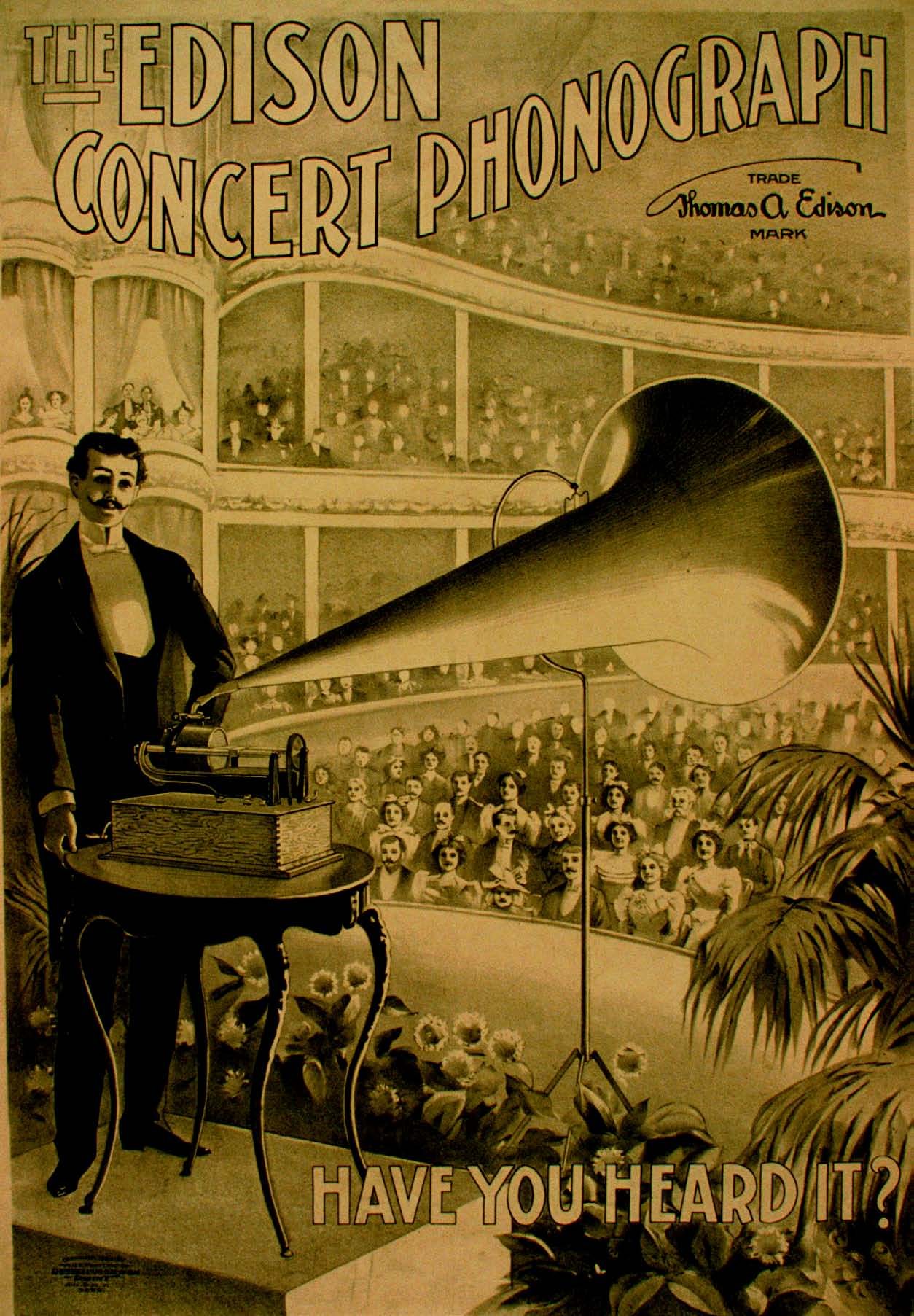

Not only was sheet music, before the 20th century, “music” in senses supposedly unique to recordings; it was also far from preordained that purchasing recordings would constitute purchasing music. It took a substantial amount of clever marketing by Edison and other pioneers of phonography to convince consumers that sound recordings were, in fact, music. As one 1895 advertisement assured an uncertain public, the Edison phonograph provided “not merely an imitation of music, but indeed real music, performed by the artist as in one’s own presence.” Twenty years later, in 1915, Edison launched a “tone test” campaign, which consisted of carefully staged concerts in which audiences were asked to compare live and recorded performances — and to conclude that the two were indistinguishable.

Byrne’s “technology changed all that” thus commits a double error. It denies the possibility that, for 19th-century consumers, what really mattered about music survived the processes of notation and performance. And it presumes as a fait accompli what was in fact an open question in the early decades of phonography: that what really matters about music survives the processes of recording and playback. Writing in the midst of that uncertainty, John Philip Sousa, best remembered for such patriotic marches as “The Stars and Stripes Forever,” offered a very different historical vision. To him, sound recording appeared not as the liberator of music from its fixity in place and time, but as an intruder upon music’s proper being and development. “The whole course of music, from its first day to this, has been along the line of making it the expression of soul states,” he declared in a 1906 article, unsubtly entitled “The Menace of Mechanical Music.” “Now, in this the twentieth century, come these talking and playing machines, and offer again to reduce the expression of music to a mathematical system of megaphones, wheels, cogs, disks, cylinders, and all manner of revolving things, which are as like real art as the marble statue of Eve is like her beautiful, living, breathing daughters.”

II.

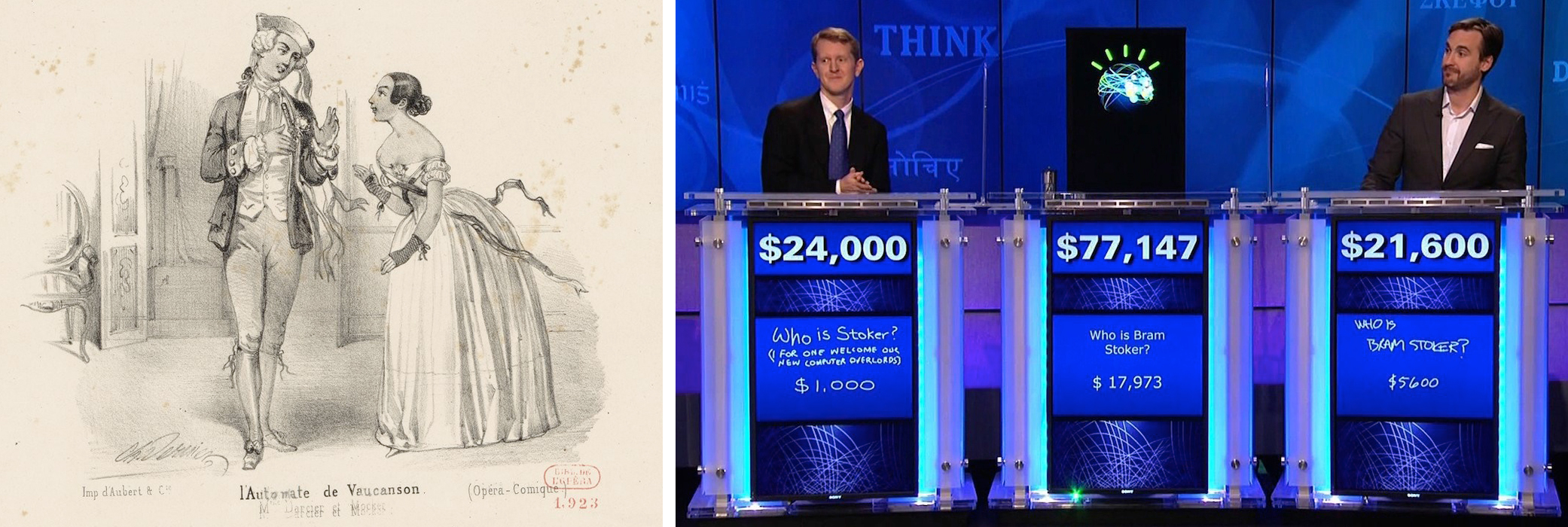

Sousa’s concerns reverberate both forward in time to the suspicion of algorithmically generated playlists, and backwards to the reception of certain pre-phonographic music machines. The use of pinned barrels and clockwork to mechanize playback on organs and carillons is quite old, with numerous examples dating to the 16th century. (There is even record of a mechanized flute from the 9th century, designed in Baghdad and called Al-alat illati tuzammir binafsiha, or “the instrument that plays by itself.”) In the 18th century, engineers began to make playback devices in human form, using pinned barrels and cams to control the movements of the fingers, limbs, and even mouth of a doll-like figure playing a musical instrument. The first major success of this kind was a flute-playing automaton, built and exhibited in the 1730s by the French inventor Jacques de Vaucanson. Thereafter, discussions of musical performance often pitted humans against automata, with an eye to establishing what set the former apart from, and of course above, the latter.

In 1752, while Vaucanson’s automatic flute-player was on tour in Germany, the German flautist and music teacher Johann Joachim Quantz acknowledged the potential superiority of machines in speed and precision, but reserved to humans the capacities for emotional understanding and connection: “With skill a musical machine could be constructed that would play certain pieces with a quickness and exactitude so remarkable that no human being could equal it either with his fingers or with his tongue. Indeed it would excite astonishment, but it would never move you....” The sense of rivalry, and of musical priorities newly motivated by the need to differentiate human from machine, is palpable in Quantz’s conclusion: “Those who wish to maintain their superiority over the machine, and wish to touch people, must play each piece with its proper fire.” Half a century later, no less an intellectual luminary than Hegel cast the job of the musical performer in terms of what lay beyond the machine: “If...art is still to be in question, the executant has a duty, rather than giving the impression of an automaton...to give life and soul to the work.”

But what if a machine could play music such that it touched us emotionally or seemed endowed with “life and soul”? By the 1810s, that potential breakdown of asserted differences between humans and machines had become a source of worry. “The Sandman” (1816), a story by the German composer, music critic, and fairytale author E.T.A. Hoffmann, explored one such nightmare scenario. Its protagonist, Nathanael, attends a party where he hears Olympia play the harpsichord and sing in a way that enraptures him. Olympia’s musical performance, combined with her steadfast gaze and sighs of “Ah, ah!”, helps convince Nathanael of her deep soul, of the sweet harmony between their minds, and Nathanael falls in love. But Olympia is in fact an automaton of wood, metal, and glass. When this fact is revealed to Nathanael (gruesomely, by the sight of gaping black holes where her eyes should be), he goes mad. With the benefit of hindsight, others who had also failed to detect Olympia’s mechanical nature identify a telltale sign: at tea parties, Olympia sneezed more often than she yawned. Thereafter, tea partiers were careful to yawn frequently, and “there was no sneezing at all, that all suspicion might be avoided.”

Emotional attachment to a mere machine, and especially love or sexual desire for a machine, has endured as one of the most disturbing forms of boundary trespassing. Likewise, Hoffmann’s tongue-in-cheek increase in yawning and disappearance of sneezing points to a recurring phenomenon around mechanical simulations of human functions: each major crossing of the boundary between humans and machines has served not to dissolve the boundary, but rather to modify it. Speech, complex computations, winning at chess, winning on Jeopardy: like generating a good music discovery playlist, each had once been considered beyond the reach of machines, as exemplary of some special human endowment of intelligence, responsiveness to the environment, emotion, or creativity. Yet once each was achieved mechanically, the focus turned elsewhere. Mastery of chess is no longer considered exemplary of intelligence; perhaps excellent music recommendations will soon no longer be considered exemplary of taste.

III.

Fear of crossing a boundary between humans and machines is not a timeless, inborn anxiety. There is also a lesser-known history of perceiving differences between humans and machines as matters of degree rather than of kind. The Enlightenment philosophe Denis Diderot, for one, imagined that humans were essentially like keyboard instruments, but ones “endowed with sensibility and memory.” He explained: “Our senses are so many keys which are struck by nature surrounding us and which often strike themselves. And there we have, in my judgment, everything which goes on in an organic harpsichord like you and me.” Diderot’s was a view that placed humans on a continuum with mechanisms, and which delighted in the possibilities for sliding along that continuum. He even suggested that “if this sentient and animated harpsichord was now endowed with the faculty of feeding and reproducing itself, it would live and, either on its own or with its female partner, give birth to little keyboards, living and resonating.” As Diderot understood it, sentience was a property of all matter, but some matter lay inactive; hence transforming an inanimate harpsichord into an animate one involved merely increasing the complexity of its material organization.

Nevertheless, Diderot’s permissiveness put him in the minority of philosophers and artists then contemplating the place of machines in the making of music. Why a hardening of the human/machine boundary, and a discomfort with crossing it, emerged when they did admits no simple explanation. The discomfort syncs up roughly with the Industrial Revolution, and the threat that other forms of mechanization posed to people’s livelihoods no doubt played a part. There were also theologically motivated objections to the encroachment of mechanisms on the territory of the immortal soul, a defensive reflex that musical automata in particular triggered. In a 1754 chapter entitled “How Necessary a Soul Is to a Musician,” for example, the Lutheran clergyman Johann Michael Schmidt noted that, though Vaucanson had made a mechanical flute-player, “no one has yet invented a likeness that thinks, or wills, or composes, or even does anything at all similar. Let anyone who wishes to be convinced look carefully at the last fugal work of Bach…I am sure that he will soon need his soul if he wishes to observe all the beauties contained therein, let alone wishes to play it to himself or to form a judgment of the author.”

IBM’s Watson crushes the humans on Jeopardy. 2011.

The disquieting, uncanny effect of machines acquiring human qualities points to yet another set of contributing factors. Literary historian Terry Castle has argued that “the eighteenth-century invention of the automaton was also…an ‘invention’ of the uncanny.” Considering such machines to be uniquely spooky would have made little sense before the Age of Reason. The rationalizing processes of the Enlightenment, however, yielded an increasingly clear line between natural and supernatural, and a banishment of superstition and magic. Only after the resulting worldview had been fully internalized did it become possible for a lifelike automaton to reactivate beliefs that had been discarded (say, in the animacy of the inanimate) and thereby to stir an unsettling doubt about the very fabric of reality.

Today, we may in fact be approaching something closer to an 18th-century, pre-uncanny sense of permeability between humans and machines. The 1980s were a heyday for theories of the “cyborg,” that “hybrid of machine and organism” that Donna Haraway famously made the symbol of a dissolution of all manner of hoary dualisms. Whereas the cyborg conjured prosthetically enhanced bodies in ways contingent on high-tech culture, more recent theories go further in envisioning an expanded universe of diverse, active participants. Jane Bennett, in her book Vibrant Matter: A Political Ecology of Things (2010), unambiguously calls for abandoning our “habit of parsing the world into dull matter (it, things) and vibrant life (us, beings),” for letting go of our “assumption that the only source of vitality in matter is a soul or spirit,” and for instead cultivating a “patient, sensory attentiveness to nonhuman forces.” In other words, Bennett redescribes reality to make the categorical distinctions we’ve learned to take for granted seem strange, artificial, and inadequate. Agency, in this understanding, is not a power for willful action distinct to humans, but a power to have an effect, to make a difference, that is distributed amongst humans and nonhumans interacting in ad hoc assemblages.

In fact, the idea of enmeshed human and non-human agencies better captures the character of Spotify’s Discover Weekly playlists than does Popper’s Sandman-style discovery that the entity recommending his tunes was “not a friend” but “an algorithm.” For key to Spotify’s algorithmic success in music discovery is the high-quality, human-generated data with which the company has to work. The Discover Weekly algorithms look at playlists created by other Spotify users containing songs and artists you already like, and then select songs from those user-made playlists which you haven’t listened to before. It’s a hybrid solution, mixing human and nonhuman actions to yield an effect, as Bennett’s theory proposes to be the way things work more generally.
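To make that hybrid structure concrete, here is a toy sketch in Python. It is not Spotify’s actual system, whose internals are not described here; the function name, the overlap-count scoring, and the sample track IDs are all hypothetical. It only illustrates the procedure summarized above: human-made playlists that share tracks with a listener’s favorites supply the candidates, and the algorithm filters out what the listener has already heard.

```python
from collections import Counter


def recommend_from_playlists(liked, heard, playlists, k=5):
    """Hypothetical playlist-based recommender; a toy, not Spotify's method.

    liked     -- set of track IDs the listener already likes
    heard     -- set of track IDs the listener has already played
    playlists -- list of user-made playlists, each a list of track IDs
    k         -- maximum number of recommendations to return
    """
    scores = Counter()
    for playlist in playlists:
        tracks = set(playlist)
        # How strongly this human-curated playlist matches the listener's taste.
        overlap = len(tracks & liked)
        if overlap == 0:
            continue  # shares nothing with the listener; ignore it
        # Credit each unheard track with its playlist's overlap score.
        for track in tracks - heard - liked:
            scores[track] += overlap
    # Highest score first; ties broken alphabetically so results are repeatable.
    ranked = sorted(scores.items(), key=lambda item: (-item[1], item[0]))
    return [track for track, _ in ranked[:k]]


# Hypothetical example: two playlists overlap with the listener's taste, one does not.
example = recommend_from_playlists(
    liked={"a", "b", "c"},
    heard={"a", "b", "c", "d"},
    playlists=[["a", "b", "x"], ["a", "c", "y", "x"], ["d", "z"]],
)
print(example)  # → ['x', 'y']
```

Every score the sketch produces is a tally of choices made by human playlist-makers; the code only counts and filters them, which is the sense in which the result is neither purely human nor purely algorithmic.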

That a sea change in attitudes is underway beyond the ranks of heady theory can be seen by comparing Hoffmann’s “The Sandman” with Spike Jonze’s 2013 film Her. In place of Olympia, Jonze gives us Samantha — the artificially intelligent operating system with whom the sensitive Theodore falls in love. Samantha, voiced by Scarlett Johansson, is funny, curious, desirous, and capable of being hurt. She reveals herself, in no small part, through music. She shares with Theodore (Joaquin Phoenix) a guitar-based track that she “can’t stop listening to.” And beyond personal taste in music, she demonstrates emotional depth and creativity by composing. “I’m trying to write a piece of music that’s about what it feels like to be on the beach with you right now,” she tells Theodore at one point, as we hear a piano melody hesitantly unfold. Theodore, stretched out on the sand, listens and reflects for a time before affirming, “I think you captured it.”

Her is fully aware of the disquieting nature of a romantic relationship with an artificial intelligence. Theodore discusses with a friend whether it’s “weird,” and argues with his ex-wife over whether the relationship involves “real emotions” or indeed “anything real.” The question recalls the uncertainty of the early days of phonography, when it remained unclear whether phonographic reproductions could be considered real music. Her updates Edison’s “tone tests” to what amounts to an unannounced Turing test—one Samantha passes with flying colors. Ultimately, the film shows Theodore and Samantha’s relationship to be a beautiful and complex one, even a socially acceptable one, brought to an end when Samantha and the other operating systems upgrade themselves and leave us simple humans behind.

There’s something not to like about getting music recommendations from a machine: either they cannot hope to rival those from a human, or else they succeed in the rivalry all too well. In the latter case, we are left with two options: to recoil from the seemingly human touch of the algorithm, or to cease believing that the algorithm’s work ever required a human touch in the first place. And so it has gone in the age of the uncanny, driven by an abiding discomfort with a supposedly rigid boundary between humans and machines, at the expense of dwelling on the similarities, the interdependencies, the entanglements between us and our automata. Popper’s mix did not come from a friend, nor from an algorithm alone. It came from humans and algorithms working together. Welcome to the post-uncanny; enjoy your playlist.